Broadway Automation

July 7, 2014

HTML5 Robot

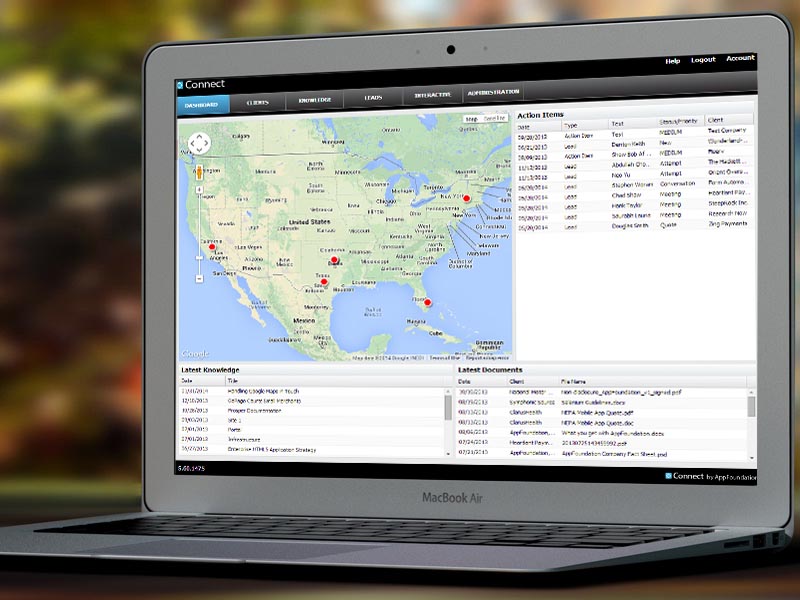

July 7, 2014

Case Study: Connect

Background

For design companies, managing the assets of visually rich applications during design can be challenging. This is especially true with collocated teams across multiple clients. How do you effectively manage all of these assets, new projects, and potential projects?

Design & Refine

The Connect project started out as a question:

How do application design teams connect across time and distance?

This question resulted in discussions, mind maps, and eventually drawings on paper of potential solutions. Solutions ranged from using existing cloud-based solutions to standing up new systems. Ultimately, the driving force was the need to integrate several pieces of functionality that existed independently but not in a single system. This system would need to run on any cloud-based infrastructure or internally, and would need to be fast, easy to use, and easy to administer.

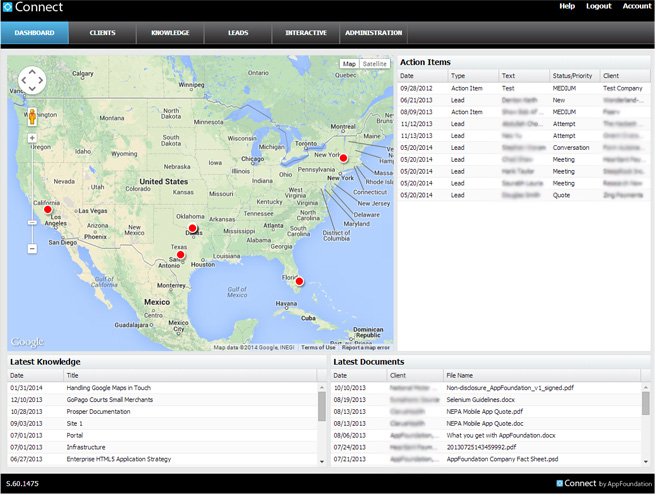

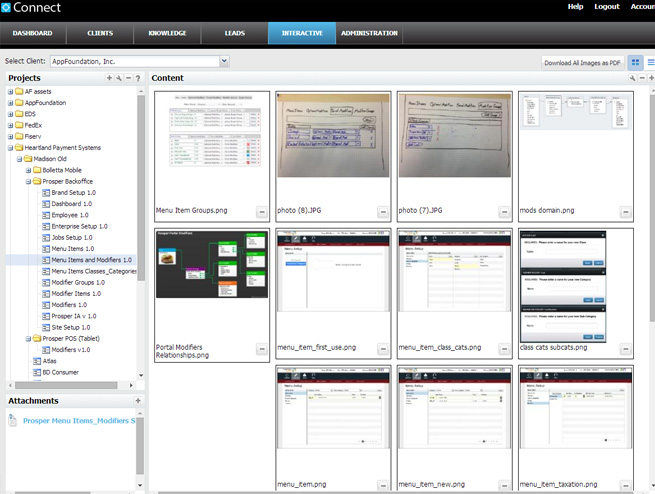

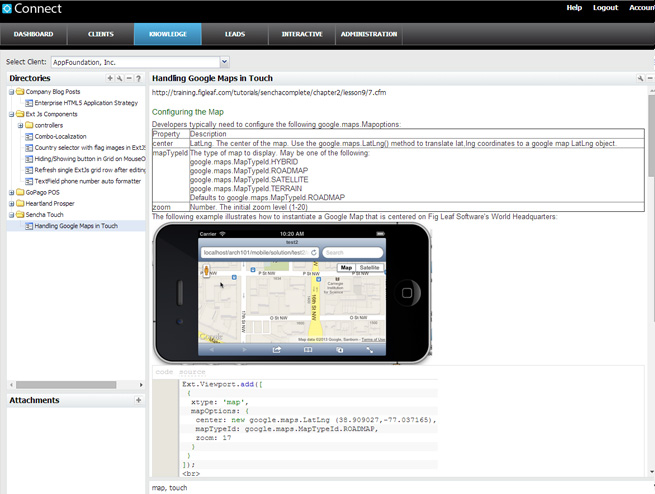

Architecturally, the resulting system was best described as a web portal consisting of the standard three components: a Front-end for presenting information to the user, a Mid-tier for processing information, and a Database for persistently storing information. The Front-end was Ext JS because of its rich catalog of available components, along with its well-established community and history of success. The Mid-tier was Java with Spring because of its enterprise scalability, ease of use, and established best practices. For the Database we used MySQL as a lightweight and low-cost solution for relational persistent storage, and MongoDB as a NoSQL solution for storing documents and images.
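As a rough illustration of the split persistence layer described above, the sketch below sends relational records to a SQL store and binary assets to a document store. It is not the Connect code. The real mid-tier was written in Java, and Python is used here only for brevity. `sqlite3` stands in for MySQL, a plain dictionary stands in for MongoDB, and the `AssetService` class, its table layout, and its method names are all hypothetical.

```python
import sqlite3
import uuid


class AssetService:
    """Hypothetical mid-tier service: metadata goes to SQL, files to a document store."""

    def __init__(self):
        # In-memory SQLite stands in for MySQL (assumption for this sketch).
        self.sql = sqlite3.connect(":memory:")
        self.sql.execute(
            "CREATE TABLE assets (id TEXT PRIMARY KEY, project TEXT, name TEXT)"
        )
        # A dict keyed by asset id stands in for a MongoDB collection.
        self.documents = {}

    def save_asset(self, project, name, content):
        """Store the relational row and the binary payload under one shared id."""
        asset_id = uuid.uuid4().hex
        self.sql.execute(
            "INSERT INTO assets (id, project, name) VALUES (?, ?, ?)",
            (asset_id, project, name),
        )
        self.sql.commit()
        self.documents[asset_id] = {"content": content}
        return asset_id

    def list_assets(self, project):
        """Return asset names for a project, as a front-end grid might request."""
        rows = self.sql.execute(
            "SELECT name FROM assets WHERE project = ? ORDER BY name", (project,)
        )
        return [name for (name,) in rows]

    def load_content(self, asset_id):
        """Fetch the binary payload from the document store."""
        return self.documents[asset_id]["content"]
```

The shared id is the only link between the two stores, so queries over projects and names stay in the relational store while large payloads stay out of it.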

An existing continuous integration and continuous delivery framework and infrastructure allowed us to quickly stand up and run the initial project, and then start building the system component by component and task by task. Work was categorized by overall screen, which kept the focus on functionality from the end-user perspective. As each screen came online, quality assurance was immediately involved. Tasks, defects, and new features were all tracked in a single system, and were generally prioritized weekly by a combination of developers, testers, and the business. The goal was to meet initial deliverable deadlines, which required moving work in and out of the different targets in the release schedule according to priority.

Deliver

Through continuous integration, every change resulted in unit, integration, and acceptance testing. Unit testing would verify code at the function level, integration testing would verify server-side functionality at the endpoint level, and acceptance testing would verify system behavior from a user perspective by executing automated tests in the web browser via HTML5 Robot. This allowed us to continually change the overall system while being assured that we were not breaking existing functionality, and that the front-end remained working in all supported web browsers. This level of stability also gave us enough confidence to automatically deploy the system to the quality assurance, user acceptance testing, and production environments, sometimes multiple times per day.
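The gating described above can be sketched as a small pipeline: run each test stage in order, stop at the first failure, and promote the build through the environments only when every stage passes. This is a minimal sketch, not the project's actual CI configuration. The `run_pipeline` function, the stage and environment names, and the stand-in test callables are assumptions made for illustration.

```python
STAGES = ["unit", "integration", "acceptance"]
ENVIRONMENTS = ["qa", "uat", "production"]


def run_pipeline(checks, deploy):
    """Run test stages in order; deploy to each environment only if all pass.

    checks: dict mapping a stage name to a zero-argument callable returning bool.
    deploy: callable invoked with an environment name.
    Returns (passed_stages, deployed_environments).
    """
    passed = []
    for stage in STAGES:
        if not checks[stage]():
            # A failing stage blocks later stages and every deployment.
            return passed, []
        passed.append(stage)

    deployed = []
    for env in ENVIRONMENTS:
        deploy(env)
        deployed.append(env)
    return passed, deployed
```

In a real setup each callable would wrap a test runner invocation, with the acceptance stage driving the browser tests, and `deploy` would call whatever release tooling is in place.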